Kubernetes implements OIDC via arguments on the API Server such as --oidc-issuer-url, etc. This works great. However, kubelogin, a common utility for accessing kubernetes this way, isn't so great: the web browser window it opens, if closed in the wrong way, can cause the authentication to fail. So what's a better solution?

Pinniped lets you configure OIDC and login on a running kubernetes cluster. This is great because you don't have to restart the cluster to get OIDC to work, and sometimes you just don't have access to configure OIDC via the API Server.

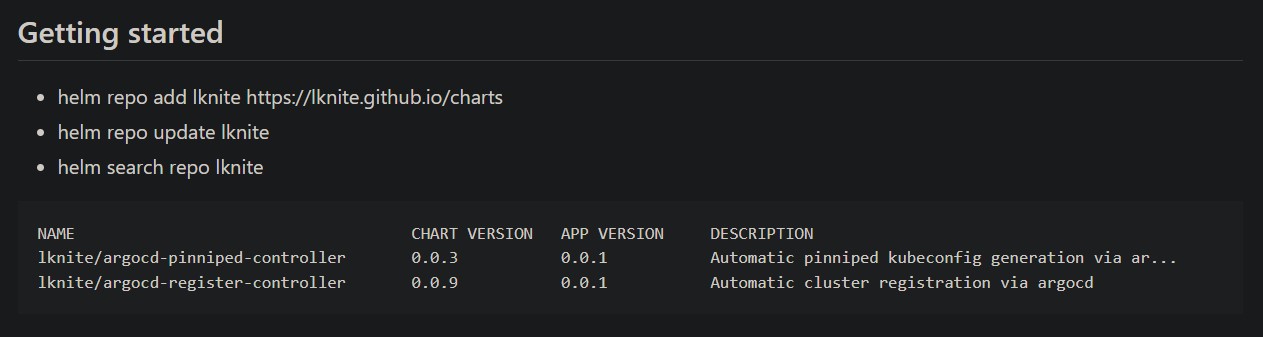

Pinniped can be configured and deployed in the same way that you deploy other addons to your clusters (see argocd – getting started, and argocd – getting started – addons).

Whether using the native OIDC solution or using pinniped, a kubeconfig must be created which can be used with kubectl to access the clusters. Pinniped has a utility to create the kubeconfig, but how do you get it to the end user? There have been various methods to accomplish this:

- the user submits a ticket, and automation sends them the pinniped kubeconfig via email

- all pinniped kubeconfig files are made available via a git repo all clients have access to

- (here’s our idea) make the pinniped kubeconfig available via a website, so we don’t need to grant git repo access in order to deliver the kubeconfig

My strategy has been to use argocd, applicationsets, and a single container running on all clusters as a means to distribute the pinniped kubeconfig to all interested parties.

* Note: pinniped kubeconfigs contain no secrets and do not need to be treated as secrets, because the user still has to log in and access is granted via groups. The only reason to limit their exposure is to reduce the risk of a denial of service attack.

Here’s the implementation:

- argocd applicationsets allow the cluster name to be used as a variable, which means we can define the url we want in each cluster to be: https://pinniped.<cluster>.<domain>

- the applicationset deploys a container running php, which uses a script to determine the cluster name. The php site displays some information to help the user get started with pinniped, plus a link to the pinniped kubeconfig

- a configmap containing all pinniped kubeconfig files is mounted into the container as a volume. This works because the url defined in the first step includes the name of the cluster and so points to the correct pinniped kubeconfig

- because this solution is deployed via argocd, all pinniped websites are updated automatically: when a new cluster is deployed, its pinniped kubeconfig is created and added to a git repo, and a script re-generates the configmap from the git repo. We just have to add a step to update the pinniped configmap as part of the new cluster deployment process
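
To make the moving parts a little more concrete, here is a minimal Python sketch of two of the pieces described above: re-generating the configmap from a directory of kubeconfig files, and mapping a request host of the form pinniped.<cluster>.<domain> to the right file. This is not the actual script or the php site. The file naming convention (one `<cluster>.yaml` per cluster), the configmap name and namespace, and all function names are assumptions made for illustration.

```python
import json
import re
from pathlib import Path

# Assumption: the git repo holds one kubeconfig per cluster, named <cluster>.yaml
KUBECONFIG_SUFFIX = ".yaml"


def build_configmap(kubeconfig_dir, name="pinniped-kubeconfigs", namespace="pinniped-site"):
    """Return a ConfigMap manifest (as a dict) with one key per kubeconfig file.

    The default name and namespace are hypothetical placeholders.
    """
    data = {}
    for path in sorted(Path(kubeconfig_dir).glob("*" + KUBECONFIG_SUFFIX)):
        # The key is the file name, which is what appears in the mounted volume
        data[path.name] = path.read_text()
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": name, "namespace": namespace},
        "data": data,
    }


def render_configmap(kubeconfig_dir):
    """Serialize the manifest as JSON, which kubectl and argocd accept like YAML."""
    return json.dumps(build_configmap(kubeconfig_dir), indent=2)


def cluster_from_host(host):
    """Extract <cluster> from a host of the form pinniped.<cluster>.<domain>."""
    hostname = host.split(":")[0].lower()  # drop an optional port
    match = re.fullmatch(r"pinniped\.([a-z0-9-]+)\.(.+)", hostname)
    return match.group(1) if match else None


def kubeconfig_key_for_host(host):
    """Return the configmap key (file name) serving this host, or None."""
    cluster = cluster_from_host(host)
    return None if cluster is None else cluster + KUBECONFIG_SUFFIX
```

In a setup like this, the new cluster deployment process would write the output of `render_configmap` back to the repo that argocd watches, and the site would use something like `kubeconfig_key_for_host` to pick the file to link to from the mounted volume.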

(video: todo)