Category Archives: Infrastructure

Making learning fun by creating a “gray area” niche goal

One time, as a way to motivate a coworker who was learning scripting, I shared with him an algorithm to generate all possible string combinations from a given set of characters. This, of course, could potentially be used to try all possible passwords for nefarious ends.

Within about 10 minutes I had a manager in the office, looking at the whiteboard, telling me they weren’t stupid, that they knew what all that was on the board, and that I needed to stop teaching the employees how to hack computers. I noticed a coworker sneaking out of the room trying to hide their laughter. I think we know who called the manager.

It still makes me laugh to this day. I suppose they weren’t completely wrong, but the way I see it, if you tell someone to cut every possible key for a type of car and try them all, at some point they’ll get into that car. Is that teaching a master class on how to break into cars? I guess, but it sure isn’t efficient; master class it is not. However, for someone new to scripting, it’s a fun algorithm to write and learn from.

Along those same “gray area” lines, I share with you a goal to help motivate one to learn kubernetes, and I’ll give you the exact steps necessary to make it happen. The goal is this: “Let’s put together a microservices-based solution to help you keep track of movies or tv shows you’d like to get around to watching some day”. I used to use a physical notepad for this back in the day, then a notepad app on my phone, but modern days allow for modern solutions. No longer do you have to type the full name of a movie or tv show; you can type a little, search for it, and when it’s found, click on it to add it to your queue.

Here’s how to do it and the applications you’ll need (this is for an on-prem setup; you are on your own if you are deploying apps the world can see from the internet, so try not to do that):

- If you don’t know kubernetes, sign up for the Udemy class “Certified Kubernetes Administrator (CKA) with Practice Tests”, then go ahead and take the exam and get the CKA certification.

- Decide which extra computer you have lying around is to be your NAS, where your network storage will live, and install TrueNAS CORE on it. Then configure iSCSI to work with kubernetes-provisioned storage. You’ll need to deploy democratic-csi into your cluster and install scsi-related utilities onto your worker nodes in the next step.

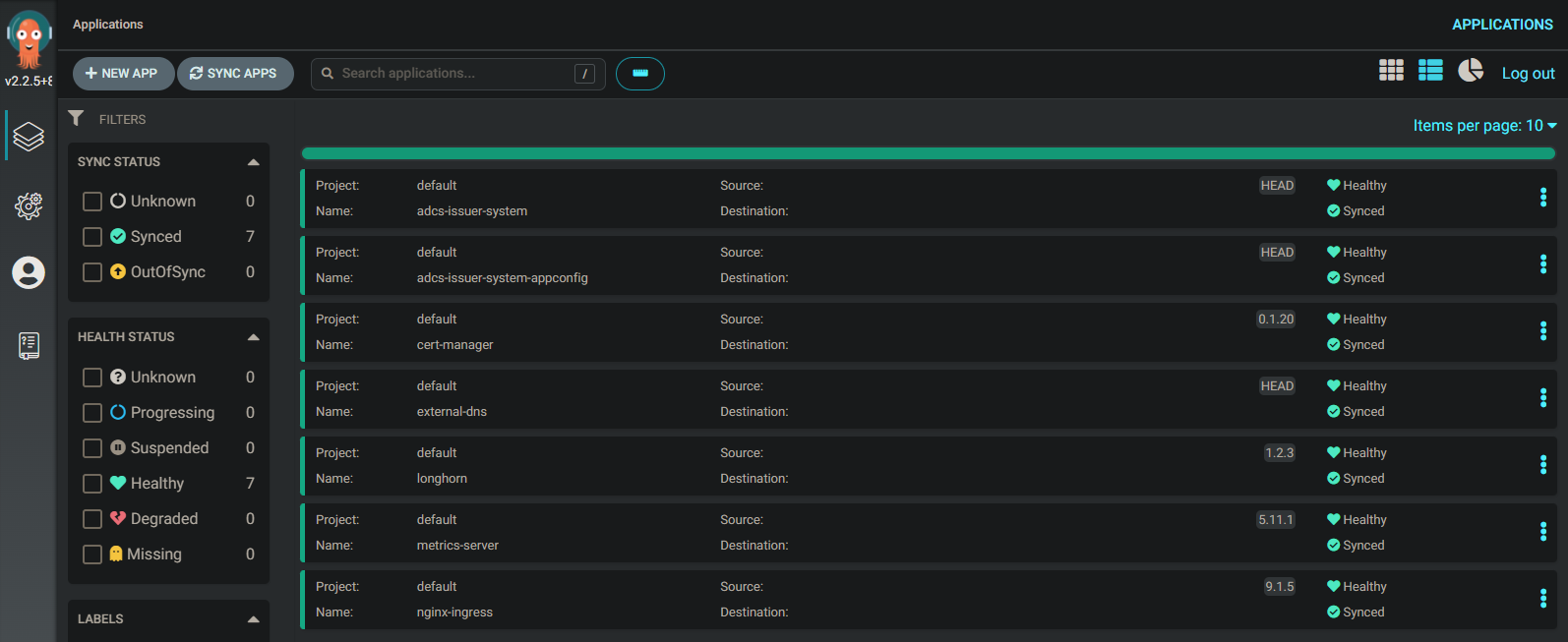

- Spin up a cluster using kubeadm, then deploy Plex Media Server into your cluster. Plex gives a netflix-like experience for viewing your home media collection. I recommend the kube-plex helm chart, deployed via gitops with argocd. kube-plex will deploy a PMS instance that spins up extra streaming processes on the fly, but I recommend disabling that feature in the values.yaml to avoid any potential file locking issues… at least in the beginning. Also, instead of using a network share for your configuration, set up taints and tolerations to direct the plex application to only spin up on one node, and use local storage there. (Plex tends to have database corruption when using network storage; go for it later if you want, but set yourself up for success initially.)

- Next, deploy sonarr and radarr using the helm charts out at k8s-at-home; these are similar apps that work with tv and movies respectively. k8s-at-home is a cool group in that they have a common library among all their helm charts, so, for example, you could set up a vpn on any particular application as a sidecar, route multiple pods through a single vpn pod, add an ingress, mount an additional volume, etc.

- Now you are done: you can browse to your sonarr and radarr installations and search for movies and tv shows you’d like to watch someday. Be sure to set up cert-manager and external-dns as well, to register the apps in your local on-prem dns and configure a valid certificate.

- You might also want to set up a vpn such as wireguard in your cluster, and forward the needed vpn-related port through your router, so that you can browse to these apps on your phone while you are out and about. That way, if you hear of a movie you want to queue up for later viewing, you can do so in the moment.

- Interestingly, you can also click on Connect in both sonarr and radarr to configure your plex server for some reason.
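The advice in the Plex step about pinning the app to a single node can be sketched roughly as below. This is only a minimal illustration, not the kube-plex chart’s documented values: the node name `worker-1` and the `dedicated=plex` label and taint are made-up placeholders, and whether a chart exposes `nodeSelector` and `tolerations` at the top level of its values.yaml is something to verify in the chart itself (many charts do).

```yaml
# Hypothetical scheduling fragment for a pod spec (or for chart values that
# pass these fields through). Names and keys below are placeholders.
#
# Prepare the chosen node first:
#   kubectl label nodes worker-1 dedicated=plex
#   kubectl taint nodes worker-1 dedicated=plex:NoSchedule

# Only schedule onto nodes carrying the label.
nodeSelector:
  dedicated: plex

# Allow the pod onto the tainted node; pods without this toleration stay off it.
tolerations:
  - key: dedicated
    operator: Equal
    value: plex
    effect: NoSchedule
```

The taint keeps other workloads off the node, while the nodeSelector keeps Plex from wandering elsewhere, so the local configuration storage is always where the pod lands.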

mapping dns via argocd applicationset and external-dns

When using ArgoCD and an ApplicationSet to deploy external-dns to all clusters, as part of a grouping of addons common to all clusters, it can be useful to configure the DNS filter using variables:

    helm:
      releaseName: "external-dns"
      parameters:
        - name: external-dns.domainFilters
          value: "{ {{name}}.k.home.net }"
        - name: external-dns.txtOwnerId
          value: '{{name}}'
        - name: external-dns.rfc2136.zone
          value: '{{name}}.k.home.net'
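For context, here is a minimal sketch of where a helm block like this could live and where `{{name}}` comes from. It assumes the ApplicationSet cluster generator, which emits one element per cluster registered in ArgoCD and exposes `{{name}}` and `{{server}}`. The ApplicationSet name, repo URL, path, project, and namespaces are placeholders, and the `external-dns.` parameter prefix is read here as external-dns being a subchart of a wrapper chart, which is an inference rather than something stated above.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: ApplicationSet
metadata:
  name: cluster-addons                 # hypothetical name
  namespace: argocd
spec:
  generators:
    - clusters: {}                     # one element per registered cluster
  template:
    metadata:
      name: '{{name}}-external-dns'
    spec:
      project: default
      source:
        repoURL: https://git.example.com/addons.git   # placeholder repo
        targetRevision: HEAD
        path: external-dns             # assumed wrapper chart directory
        helm:
          releaseName: "external-dns"
          parameters:
            - name: external-dns.txtOwnerId
              value: '{{name}}'        # filled in per cluster by the generator
      destination:
        server: '{{server}}'
        namespace: external-dns
```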

These parameters place the cluster name in the dns names managed by external-dns, resulting in FQDNs such as:

app.dev.k.home.net

app.test.k.home.net

app.prod.k.home.net

Though, for my core cluster with components used by all clusters, I like to leave out the cluster name so all core components are at the k.<domain> level:

argocd.k.home.net

harbor.k.home.net

keycloak.k.home.net

git-based homedir folder structure (and git repos) using lessons learned

After reinstalling everything, including my main linux workbench system, it became the right time to finally get my home directory into git. Taking all lessons learned up to this point, it seemed a good idea to clean up my git repo strategy as well. The revised strategy:

[Git repos]

Personal:

- workbench-<user>

Team (i for infrastructure):

- i-ansible

- i-jenkins (needed ?)

- i-kubernetes (needed?)

- i-terraform

- i-tanzu

Project related: (source code)

- p-lido (use tagging dev/test/prod)

doc

src

Jenkins project pipelines:

- j-lifecycle-cluster-decommission

- j-lifecycle-cluster-deploy

- j-lifecycle-cluster-update

- j-lido-dev

- j-lido-test

- j-lido-prod

Cluster app deployments:

- k-core

- k-dev

- k-exp

- k-prod

[Folder structure]

i-ansible (git repo)

doc

bin

plays ( ~/a )

i-jenkins (git repo) (needed ?)

doc

bin

pipelines ( ~/j )

i-kubernetes (git repo) (needed ?)

doc

bin

manage ( ~/k )

templates

i-terraform (git repo)

doc

bin

plans (~/p)

k-dev

i-tanzu (git repo)

doc

bin

application.yaml (-> appofapps)

apps (~/t)

appofapps/ (inc all clusters)

k-dev/cluster.yaml

src

<gitrepo>/<user> (~/mysrc) (these are each git repos)

<gitrepo>/<team> (~/s) (these are each git repos)

j-lifecycle-cluster-decommission

j-lifecycle-cluster-deploy

- deploy cluster

- create git repo

- create adgroups

- register with argocd global

j-lifecycle-cluster-update

j-lido-dev

j-lido-test

j-lido-prod

k-dev

application.yaml (-> appofapps)

apps

appofapps/ (inc all apps)

k-exp

application.yaml (-> appofapps)

apps

appofapps/ (inc all apps)

k-prod

application.yaml (-> appofapps)

apps

appofapps/ (inc all apps)

workbench-<user> (git repo)

doc

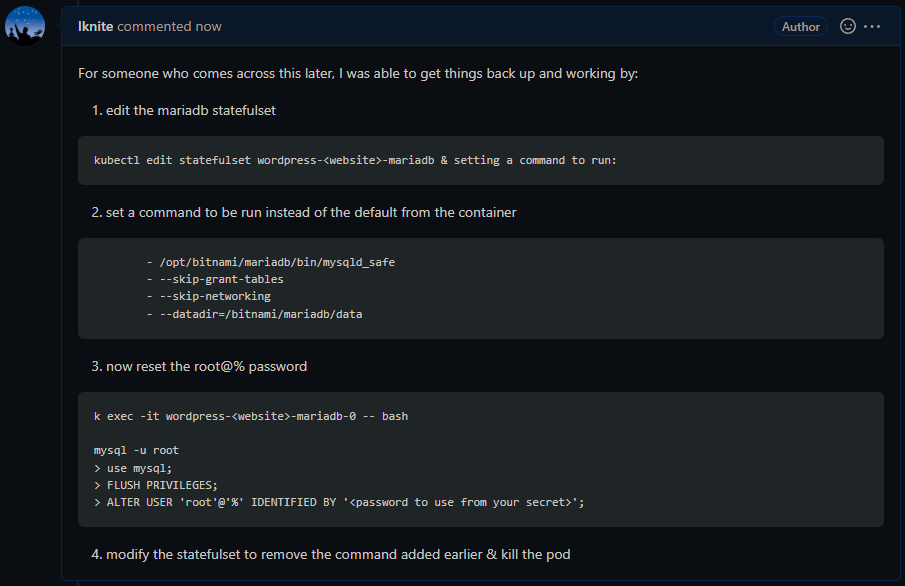

bin

kubernetes disaster recovery

Deploying via git using argocd or flux makes disaster recovery fairly straightforward.

Using gitops means you can delete a kubernetes cluster, spin up a new one, and have everything deployed back out in minutes. But what about recovering the pvcs used before?

If you are using an infrastructure which implements csi, then you are able to allocate pvcs using storage managed outside of the cluster. And, it turns out, reattaching to those pvcs is possible but you have to plan ahead.

Instead of writing yaml to spin up a pvc automatically, create the pv and pvc using manually set values. Or spin up the pvcs automatically and then go back and modify the yaml to set recoverable values. The howto is right up top in the csi documentation: https://kubernetes.io/blog/2019/01/15/container-storage-interface-ga/
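A rough sketch of what “manually set values” can look like for a pre-existing csi volume. Everything specific here is a placeholder: the driver name, the volume handle, the storage class, the namespace, and the object names. Some drivers also need extra `volumeAttributes` under `csi`, so check your driver’s documentation before relying on this.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: plex-config-pv                 # hypothetical name
spec:
  capacity:
    storage: 10Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain   # keep the backing volume if the claim goes away
  storageClassName: manual-csi         # placeholder; must match the claim below
  csi:
    driver: csi.example.com            # placeholder driver name
    volumeHandle: existing-volume-id   # placeholder id of the volume on the storage side
    fsType: ext4
  claimRef:                            # reserve this pv for one specific claim
    namespace: media
    name: plex-config
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: plex-config
  namespace: media
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: manual-csi
  volumeName: plex-config-pv           # bind to the pv above instead of provisioning a new one
  resources:
    requests:
      storage: 10Gi
```

Because the names and the volume handle are fixed in git, a freshly built cluster that syncs these manifests binds to the same backing volume rather than provisioning an empty one.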

Similarly, it is common for applications to spin up with randomly set admin passwords and such. However, imagine a recovery scenario where a new cluster is stood up: you don’t want a new password generated along with it. Store the password in a vault and reference the vault.
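One possible way to reference a vault, sketched with the External Secrets Operator, which is my choice for illustration and not something the setup above depends on. The store name, secret names, and vault path are placeholders, and the `apiVersion` depends on the operator release you have installed, so check your CRDs.

```yaml
apiVersion: external-secrets.io/v1beta1   # may differ by operator release
kind: ExternalSecret
metadata:
  name: app-admin-password             # hypothetical name
  namespace: media
spec:
  refreshInterval: 1h
  secretStoreRef:
    kind: ClusterSecretStore
    name: vault-backend                # placeholder store pointing at your vault
  target:
    name: app-admin-password           # kubernetes Secret created from the vault value
  data:
    - secretKey: password              # key inside the resulting Secret
      remoteRef:
        key: apps/example/admin        # placeholder path in the vault
        property: password
```

The application’s chart would then be pointed at the resulting Secret (often via an existing-secret style value) so a rebuilt cluster comes up with the same credentials.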

These two steps do add a little work, but that is the idea: take a little more time to do things right. In a production environment you want this.

Infrastructure side solution: https://velero.io/

Todo: Create a video deleting a cluster and recovering all apps with a new cluster, recovering pvcs also (without any extra work on the recovery side).

Kubernetes utilities

So many utilities out there to explore:

https://collabnix.github.io/kubetools/

Love how every k8s-at-home helm chart can pipe an application via a VPN sidecar through a few lines of yaml. And, said sidecar can have its own VPN connection or use a single gateway pod with the VPN connection, so cool!

The bitnami team also has a great standard among their helm charts, for example, consistent ways to specify a local repo and ingresses. Many works in progress.

Took a look at tor solutions just for fun: several proxies in kubernetes… as well as solutions ready to set up a server via an onion link using a tor kubernetes controller. Think I’ll give it a try.

This kube rabbit hole is so much fun!

xcp-ng & kubernetes

Decided to test out xcp-ng as my underlying infrastructure to set up kubernetes clusters.

Initially xcp-ng, the open source implementation of Citrix’s XenServer, appears very similar to vmware yet the similarities disappear quickly. VMware implemented the csi and cpi apis used by kubernetes integrations early on. These implementations are only becoming more evolved whereas xcp-ng is looking for volunteers to begin the implementations. Why start from scratch when vmware already has a developed solution?

What about a utility to spin up a cluster? Google, AWS, and VMware all have a cli to spin up and work with clusters… xcp-ng not so much; you would need to use a third-party solution such as terraform.

xcp-ng is great on a budget but lacks the apis needed for a fully integrated kubernetes solution. Clusters and their storage solutions must be built and maintained manually. At this point I wonder how anyone could choose a Citrix XenServer solution knowing everything is headed towards kubernetes. But for a free solution with two or three manually managed clusters, yes.